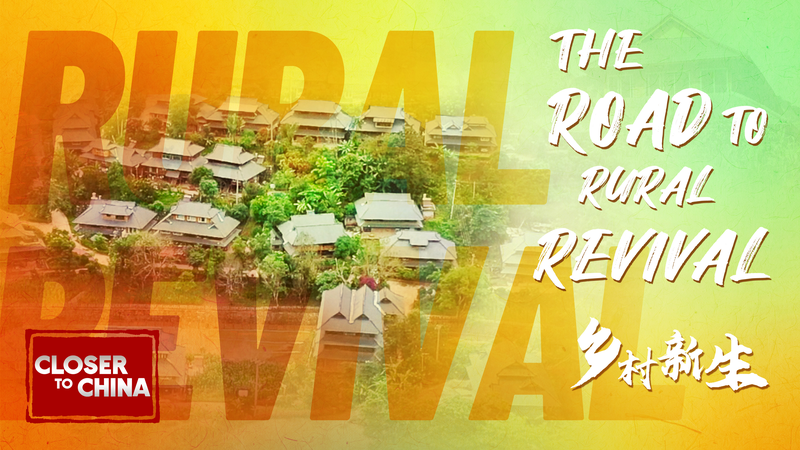

🌄 Tucked deep in the mountains along the China–Laos border, Hebian Village has a story that reads like a real-life fairytale. Once struggling with poverty, this hidden gem has totally transformed itself, becoming a beacon of hope for rural communities everywhere. How? By betting on itself!

Local leaders and young, creative entrepreneurs decided the best way forward was to look back, way back, to the village's rich Yao cultural traditions. They've skillfully woven these heritage threads into a vibrant new tapestry of eco-tourism and modern, sustainable businesses. Think homestays nestled in stunning landscapes 🏞️, community-run craft workshops, and agri-tech ventures that connect local products to global markets.

The result? A thriving, self-sustaining village that's proof positive of what community-led vision can achieve. This isn't just about economics; it's about preserving identity while embracing the future. The energy there is contagious!

Now, the big question on everyone's mind in 2026: Can Hebian's blueprint for success be applied elsewhere? 🤔 Experts and development watchers are looking closely. The combination of cultural preservation, environmental stewardship, and entrepreneurial spirit creates a powerful formula that could inspire similar revivals in rural regions across Asia and beyond.

Hebian's journey reminds us that sometimes the most powerful solutions aren't imported. They're grown right at home, by the people who know their land and their community best. It's a lesson in resilience and innovation that's making waves far beyond the village's mountain borders.

Reference(s):

cgtn.com